mi-zo

multi-information for camera control in 3D scenes with multiple objects

Paper: https://arxiv.org/abs/2512.24826

Project: https://mi-zo.github.io/mi-zo/

Citation: see end of page.

MI-ZO is a method for improving the performance of cross-modal systems trained on 2D visual inputs when they reason over 3D scenes. A 3D scene can be converted into a sequence of 2D viewpoints for a vision-language model (VLM), but the dimensional shift can lead to errors when assessing objects in the scene. Our framework consists of a controller that uses the outputs of a novel information-theoretic measurement method over the multimodal inputs to identify optimal camera viewpoints.
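For readers unfamiliar with the term, the sketch below computes the textbook multi-information (total correlation) of several discrete variables: the sum of their marginal entropies minus their joint entropy. It is background only. It is not the MI-ZO measurement method, and the function names `entropy` and `multi_information` are illustrative assumptions rather than part of any released code.

```python
from collections import Counter
from math import log2


def entropy(samples):
    """Shannon entropy in bits of the empirical distribution of hashable samples."""
    counts = Counter(samples)
    n = len(samples)
    return -sum((c / n) * log2(c / n) for c in counts.values())


def multi_information(rows):
    """Textbook total correlation: sum of marginal entropies minus joint entropy.

    rows: list of equal-length tuples, one discrete value per variable.
    (Illustrative helper, not the estimator used by MI-ZO.)
    """
    rows = [tuple(r) for r in rows]
    columns = zip(*rows)  # one sequence of samples per variable
    return sum(entropy(list(col)) for col in columns) - entropy(rows)


# Two independent fair bits share no information.
independent = [(0, 0), (0, 1), (1, 0), (1, 1)]
print(multi_information(independent))  # 0.0 bits

# Three perfectly coupled bits: 3 * 1 bit marginal - 1 bit joint.
coupled = [(0, 0, 0), (1, 1, 1)]
print(multi_information(coupled))  # 2.0 bits
```

The quantity is zero when the variables are independent and grows as they become more tightly coupled.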

The motivating application is planetary science: generating or analysing 3D reconstructions of Mars depends on resolving colours and fine-grained surface details. Matching a description to a scene becomes harder when the description refers to differences between similar objects, such as boulders in the same outcrop.

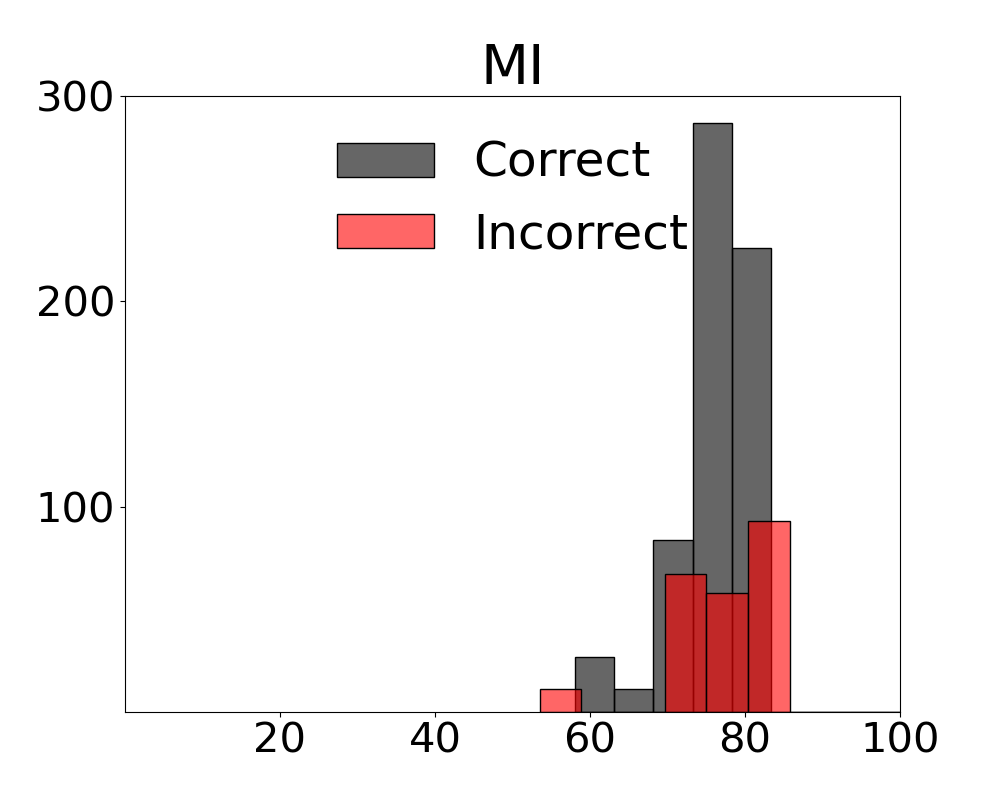

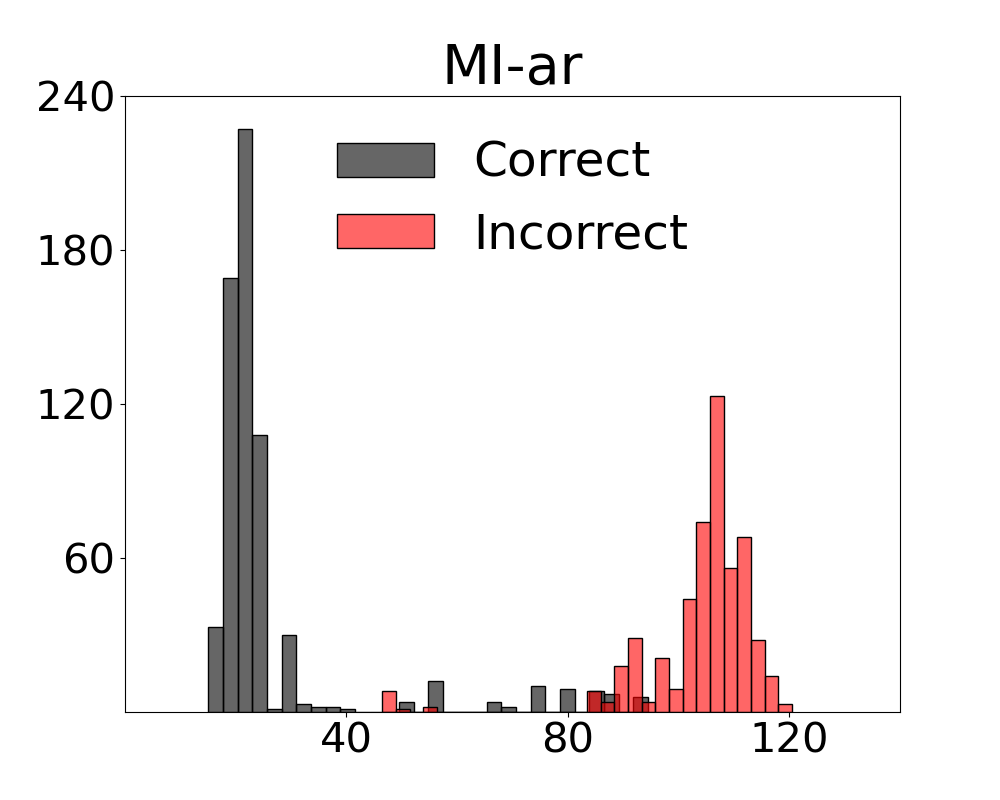

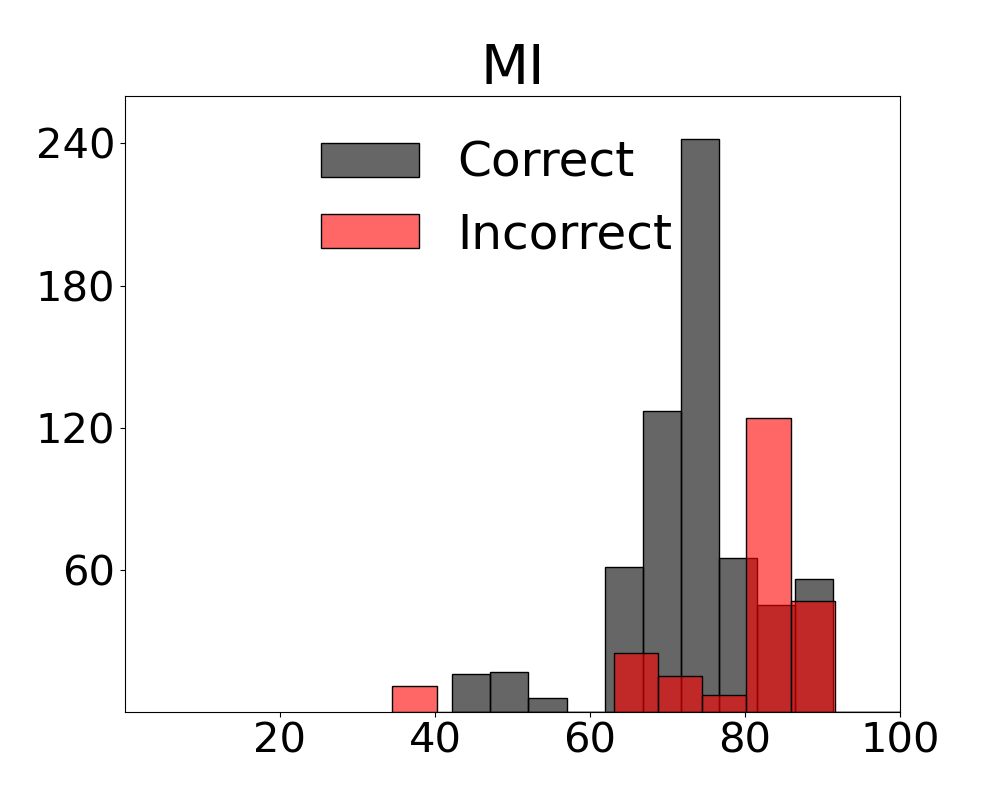

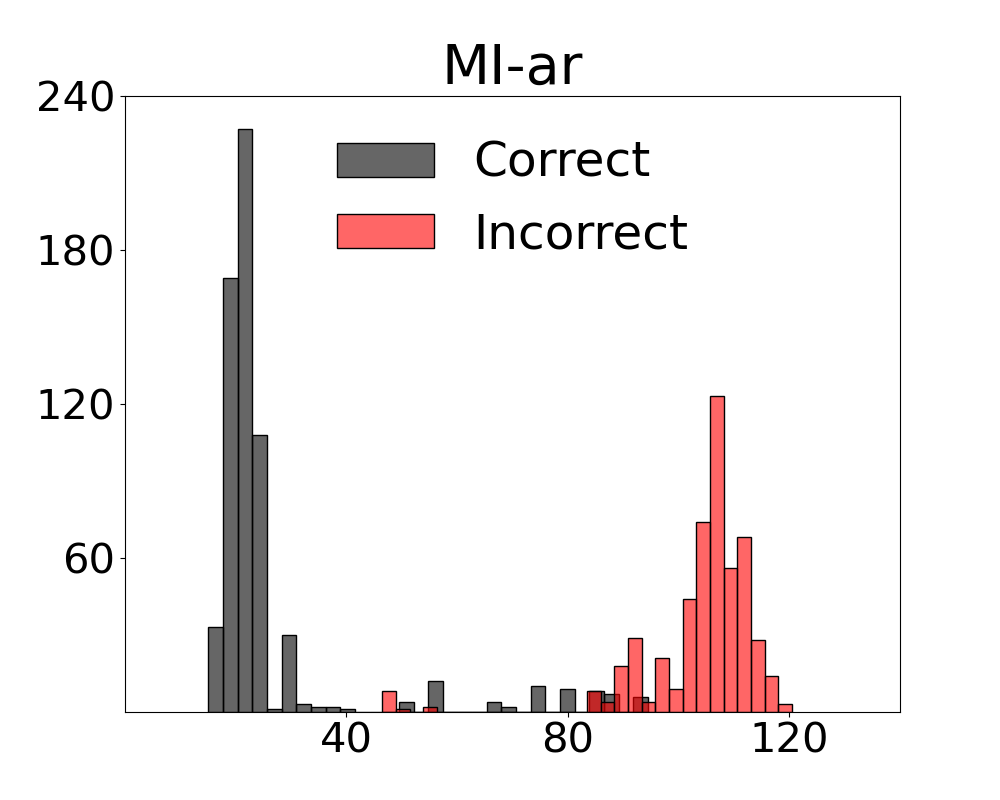

We assume that the number of mistakes a VLM makes is related to scene complexity. To demonstrate this relationship, we provide a diagnostic called UC-3DS-MI, which contains uniform and complex scenes. Our MI-ZO measurement method distributes scores in relation to the correctness of a system’s responses.

GO-LED-OL

GH-LED

Our method combines MI-ZO with an in-scene camera controller that predicts the sequence of 2D viewpoints along the x-, y-, and z-axes that is most likely to return an accurate assessment by the VLM. A measurement round is followed by a correction round with controller-predicted actions. In contrast to expensive fine-tuning or training an adapter, our controller improves model performance after a couple of demonstrations, and so is suited to settings with limited data.
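To make the idea of derivative-free camera control concrete, here is a minimal sketch of a greedy search that moves a camera along the x-, y-, and z-axes using only function evaluations, never gradients. It is a simplification under stated assumptions, not the MI-ZO controller: the real controller learns from demonstrations, whereas this sketch just measures each candidate move and applies the best one. The names `ACTIONS`, `measurement_round`, `correction_round`, and `toy_score` are hypothetical, and `toy_score` stands in for whatever measurement signal is available.

```python
from typing import Callable, List, Tuple

Vec3 = Tuple[float, float, float]

# Candidate camera moves: one step forward or back along each axis.
ACTIONS: List[Vec3] = [
    (1, 0, 0), (-1, 0, 0),
    (0, 1, 0), (0, -1, 0),
    (0, 0, 1), (0, 0, -1),
]


def move(pos: Vec3, action: Vec3, step: float) -> Vec3:
    """Return the camera position after applying one action."""
    return tuple(p + step * a for p, a in zip(pos, action))


def measurement_round(pos: Vec3, score: Callable[[Vec3], float], step: float):
    """Score every candidate move by evaluating the score function only."""
    return [(score(move(pos, a, step)), a) for a in ACTIONS]


def correction_round(pos: Vec3, score: Callable[[Vec3], float],
                     step: float = 1.0, n_moves: int = 3) -> List[Vec3]:
    """Greedily apply the best-scoring move up to n_moves times.

    Returns the list of visited viewpoints, starting with the initial one.
    """
    path = [pos]
    for _ in range(n_moves):
        best_score, best_action = max(measurement_round(pos, score, step))
        if best_score <= score(pos):  # no candidate improves on the current view
            break
        pos = move(pos, best_action, step)
        path.append(pos)
    return path


def toy_score(pos: Vec3) -> float:
    """Hypothetical score: higher when closer to an assumed good viewpoint."""
    target = (2.0, 0.0, -1.0)
    return -sum((p - t) ** 2 for p, t in zip(pos, target))


path = correction_round((0.0, 0.0, 0.0), toy_score)
print(path[-1])  # (2.0, 0.0, -1.0)
```

The sketch interleaves measuring and correcting at every step for brevity. Separating them into one measurement round followed by one correction round, as described above, would only change where the scores are collected.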

On our GeoProperties-3DS benchmark, balanced error rate drops by 19 points. We also introduce two additional benchmarks focusing on feature identification and object occlusions. Further details are available on the MI-ZO project page.
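For reference, balanced error rate is commonly defined as the mean of the per-class error rates, so that rare classes count as much as frequent ones. The sketch below implements that standard definition on toy labels. The function name and the data are illustrative assumptions, not the benchmark's evaluation code or results.

```python
from collections import defaultdict


def balanced_error_rate(y_true, y_pred):
    """Mean of per-class error rates (equivalently 1 - balanced accuracy)."""
    if len(y_true) == 0 or len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must be non-empty and equal in length")
    totals = defaultdict(int)  # number of examples per true class
    errors = defaultdict(int)  # number of misclassified examples per true class
    for t, p in zip(y_true, y_pred):
        totals[t] += 1
        if t != p:
            errors[t] += 1
    return sum(errors[c] / totals[c] for c in totals) / len(totals)


# Toy data: always predicting the majority class gives 80% accuracy,
# but the minority class is always wrong, so the balanced error rate is 0.5.
y_true = [1, 1, 1, 1, 0]
y_pred = [1, 1, 1, 1, 1]
print(balanced_error_rate(y_true, y_pred))  # 0.5
```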

Please cite our work if you find it useful:

Armitage, Jason and Rico Sennrich. Video and Language Alignment in 2D Systems for 3D Multi-object Scenes with Multi-Information Derivative-Free Control. arXiv preprint arXiv:2512.24826 (2025).

BibTeX:

@misc{armitage2025mizo,
  title         = {Video and Language Alignment in 2D Systems for 3D Multi-object Scenes with Multi-Information Derivative-Free Control},
  author        = {Armitage, Jason and Sennrich, Rico},
  year          = {2025},
  eprint        = {2512.24826},
  archivePrefix = {arXiv},
  primaryClass  = {cs.CV},
  doi           = {10.48550/arXiv.2512.24826},
  note          = {Accepted for publication at the IEEE/CVF Winter Conference on Applications of Computer Vision 2026}
}
